Starter

A few days ago Simon Brown posted a thoughtful piece called “Package by component and architecturally-aligned testing.” The first part of the post discusses the tension between two common packaging approaches: package-by-layer and package-by-feature. His conclusion, that neither is the right answer, is supported by a quote from Jason Gorman that expresses the essence of thought over dogma:

> The real skill is finding the right balance, and creating packages that make stuff easier to find but are as cohesive and loosely coupled as you can make them at the same time

Simon then introduces an approach that he calls package-by-component, where he describes a component as:

> a combination of the business and data access logic related to a specific thing (e.g. domain concept, bounded context, etc)

By giving every component a public interface and package-protected implementation, any feature that needs to access data related to that component is forced to go through the public interface of the component that ‘owns’ the data. No direct access to the data access layer is allowed. This is a huge improvement over the frequent spaghetti-and-meatball approach to encapsulation of the data layer. I like this architectural approach. It makes things simpler and safer. But Simon draws another implication from it:

> how [do] we mock-out the data access code to create quick-running “unit tests”? The short answer is don’t bother, unless you really need to.

I tweeted that I couldn’t agree with this, and Simon responded:

> This is a topic that polarises people and I’m still not sure why

Main course

I’m going to invoke the rule of 3 to try and lay out why I disagree with Simon.

Fast feedback

The main benefit of automated tests is that you get feedback quickly when something has gone wrong. The longer it takes to run the tests, the longer it’ll take before you get feedback. Your ‘slow’ tests might give you useful feedback quite quickly, but your ‘fast’ tests should give you feedback faster. I want to get feedback as fast as possible. I like the tests to run in the background as I type. I certainly want them to run on my desktop before I check in code.

I’m not saying longer running tests aren’t valuable – they are. But if I can write a test that gives me meaningful feedback faster, then I want to write that test. Frequently that will mean replacing the ‘slower’ parts of my system with something faster (such as a stub).
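To make the stub idea concrete, here is a minimal sketch in Python. Every name in it (`CustomerRepository`, `StubCustomerRepository`, `GreetingService`) is hypothetical and invented for illustration; none of it comes from Simon's post. It assumes the 'slow' part is a database-backed repository and shows an in-memory stand-in playing the same role, so the business logic can be tested without touching a database.

```python
from __future__ import annotations

from typing import Optional, Protocol


class CustomerRepository(Protocol):
    """Hypothetical role: whatever supplies customer data to the business logic."""

    def find_name(self, customer_id: int) -> Optional[str]: ...


class StubCustomerRepository:
    """In-memory stand-in for a slower, database-backed implementation."""

    def __init__(self, names: dict[int, str]) -> None:
        self._names = names

    def find_name(self, customer_id: int) -> Optional[str]:
        # A dictionary lookup replaces the database round trip.
        return self._names.get(customer_id)


class GreetingService:
    """Business logic under test; it only knows about the repository role."""

    def __init__(self, customers: CustomerRepository) -> None:
        self._customers = customers

    def greet(self, customer_id: int) -> str:
        name = self._customers.find_name(customer_id)
        return f"Hello, {name}!" if name is not None else "Hello, stranger!"


def test_greets_known_customer_by_name() -> None:
    service = GreetingService(StubCustomerRepository({1: "Ada"}))
    assert service.greet(1) == "Hello, Ada!"


def test_greets_unknown_customer_generically() -> None:
    service = GreetingService(StubCustomerRepository({}))
    assert service.greet(42) == "Hello, stranger!"
```

Tests like these say nothing about whether the real repository talks to the database correctly, which is why I still want some slower tests around it. What they do give me is an answer about the greeting logic in milliseconds.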

Design damage fallacy

DHH talked about test-induced design damage, but I don’t recognise tests as an inherent cause of damage any more than I recognise design patterns as the cause of pattern-induced design damage. We’re all human and we can get things wrong. You can go crazy with a dependency injection (DI) framework and inject a zillion dependencies, but that’s not the fault of the framework – it’s you not understanding how to write maintainable, modular software.

There are plenty of ways to move your code away from implicit, tightly coupled dependencies towards a style where it’s more loosely coupled and dependencies are explicit. Is this design damage? Not in my experience. Apart from giving us the ability to write fast, isolated tests this sort of decoupling also forces us to think about roles and responsibilities and express them in our architecture. Win-win.
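As one small illustration of moving from an implicit dependency to an explicit one, consider code that depends on the current time. This is a sketch under my own assumptions: the class names and the 09:00 to 17:00 opening hours are made up for the example. The first class reaches for the system clock directly; the second takes the clock as a constructor argument, which makes the dependency visible and lets a test supply a fixed time.

```python
from datetime import datetime, time
from typing import Callable


class ImplicitOpeningHours:
    """Tightly coupled: the system clock is a hidden dependency."""

    def is_open(self) -> bool:
        # The result depends on when the test happens to run.
        return time(9, 0) <= datetime.now().time() < time(17, 0)


class OpeningHours:
    """Loosely coupled: the clock is an explicit, replaceable collaborator."""

    def __init__(self, now: Callable[[], datetime] = datetime.now) -> None:
        self._now = now

    def is_open(self) -> bool:
        return time(9, 0) <= self._now().time() < time(17, 0)


def test_open_at_noon() -> None:
    noon = lambda: datetime(2024, 1, 1, 12, 0)
    assert OpeningHours(now=noon).is_open()


def test_closed_at_five_pm() -> None:
    closing_time = lambda: datetime(2024, 1, 1, 17, 0)
    assert not OpeningHours(now=closing_time).is_open()
```

Production code can still call `OpeningHours()` and get the real clock by default, so the explicit version costs callers nothing. The change also names a role (something that tells us the time) that was previously buried inside the method.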

Consistency of message

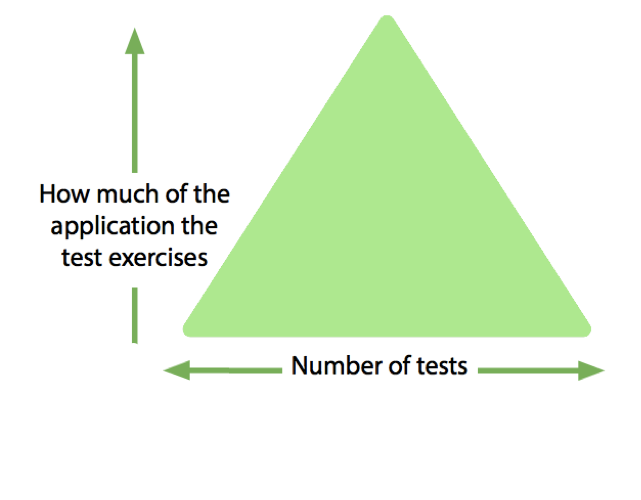

The testing pyramid was never a pyramid – it was always a triangle. It was always an approximation of the world. The words inside the triangle have always been problematic and I don’t use them any more. Instead I rely on labelled axes to get the meaning across:

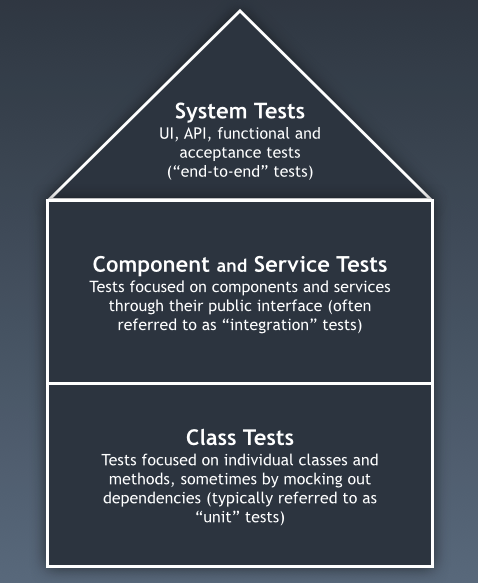

I just don’t think that Simon’s house-shaped diagram helps make things clearer.

Should there be the same number of “Class” tests as there are “Component” or “Service” tests? Is the distinction between “Component” tests and “Class” tests meaningful? I would answer both of these questions with a resounding “No!”

For me, the simplicity of the empty triangle conveys the spirit of the message (fewer large tests, more small tests). It remains consistent with most textbooks and blogs and, most importantly, doesn’t rely on interpretations of “component” or “unit.”

Afters

Tests are examples of how our systems work. They document a system’s behaviour and its usage. They tell us when things have been broken. I think Simon and I agree on these points.

We also agree, I think, that mindless writing of tests (or any code) generally leads to a mess that we (or someone else) have to clean up later. A bad test is a bad test – so write good (useful, maintainable, repeatable, necessary) tests. Not all fast tests are good – not all slow tests are bad – not everything has to be mocked. If a test doesn’t help you learn something about the system, then delete it!

Simon’s (and Jason’s) packaging advice is good. But, before you extrapolate to conclude that his advice about not bothering to write small, fast tests is also good, think about your specific context. Consider how valuable fast feedback is, and whether you’d be prepared to wait longer before you know whether you’ve broken something.

Good architecture and small, fast tests are not mutually exclusive.
